News

Anthropic is getting sued?

We previously reported how Anthropic had avoided legal repercussions for their training data because they trained on books that they had bought.

It turns out that they had also pirated a large number of books, around 7 million, and are now facing potentially massive damages for doing so. The judge has already determined that copyright infringement took place, so all that is left is to assess damages. Under current US statutory damages, that is a minimum of $750 per book, and up to $150,000 per book in the worst case. This means that on the low end Anthropic would owe over $5 billion, and in the worst case the total could exceed $1 trillion, though no jury would actually award that much.
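For a rough sense of scale, here is the back-of-the-envelope math as a small Python sketch. It assumes every one of the roughly 7 million books counts as a separate work and receives the same per-work award, which a real court would not necessarily do. The function and variable names are purely illustrative.

```python
# Figures quoted in the article; the book count is an approximation.
BOOKS = 7_000_000
MIN_PER_WORK = 750        # statutory minimum per infringed work, USD
MAX_PER_WORK = 150_000    # worst-case per-work figure quoted above, USD


def damages_range(works, low=MIN_PER_WORK, high=MAX_PER_WORK):
    """Return (minimum, maximum) total damages in USD for a number of works."""
    return works * low, works * high


low_total, high_total = damages_range(BOOKS)
print(f"low end:  ${low_total:,}")   # 7,000,000 x $750
print(f"high end: ${high_total:,}")  # 7,000,000 x $150,000
```

Under these assumptions the low end comes to about $5.25 billion and the high end to about $1.05 trillion. Changing `BOOKS` shows how sensitive both totals are to how many works end up counted.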

Previously, Anthropic CEO Dario Amodei had said the company would not take any funding from Gulf states such as the UAE and Saudi Arabia, unlike OpenAI, which had just done so to finance its new half-trillion-dollar Stargate data center project. It seems that this potentially massive penalty has changed his mind, as in a leaked memo he backpedaled, saying:

“Unfortunately, I think ‘No bad person should ever benefit from our success’ is a pretty difficult principle to run a business on” (Dario Amodei, leaked memo)

This will probably be settled out of court, but even so, it will be a big blow to Anthropic. They are also unlucky to be the first to come under scrutiny, as pretty much all major labs do the same, and platforms like Anna’s Archive actively offer datasets to LLM trainers.

Releases

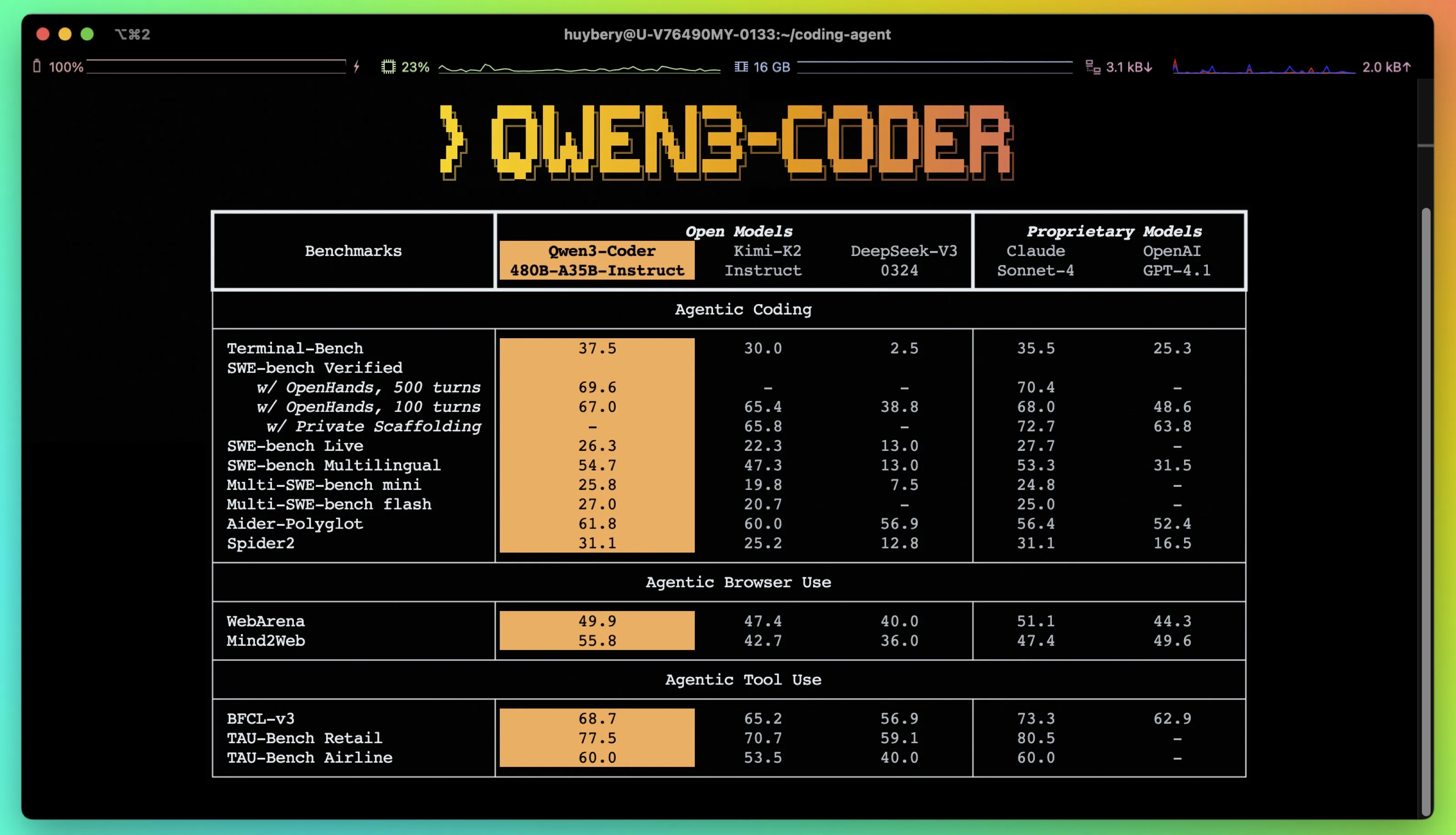

Qwen Fights For the Top

Jealous of all the attention fellow Chinese AI lab Moonshot has been getting for its Kimi K2 model, the Alibaba Qwen team has released not just one but two new “SOTA” models this week.

The first is an updated version of the Qwen3 235B MoE model, which was the largest in the Qwen3 family at the time of its release. It claims large bumps across all benchmarks. It also deviates from the previous hybrid-thinking Qwen3 models, in which you could toggle between reasoning and non-reasoning mode by appending /no_think to the end of your prompts. Instead, they have released two separate models, a thinking and a non-thinking version.

The second is the first model in the Qwen3 Coder series, a massive 480B-parameter MoE model that is meant to rival Claude Sonnet. Alongside it, they are releasing a fork of the Gemini CLI terminal UI that has been optimized to work with Qwen3 Coder.

Both are Qwen models, which means that they benchmark very well, but their real-world performance is yet to be seen. From what I have seen so far, they are not as good as Kimi K2, but they are definitely ahead of the rest of the open source models out there. They have not drawn the same oohs and aahs that I saw when Kimi K2 was released, but I have not seen any catastrophic issues with them either. If I had a tier list, I would put them below Kimi K2, in the same tier as DeepSeek R1. This may seem bad, but remember that these models are 2 to 4x smaller than the models they are being compared against, which is no small feat and makes running them at home that much easier.

They also teased that they will be releasing smaller versions of both models next week, so stay tuned for those.

Research

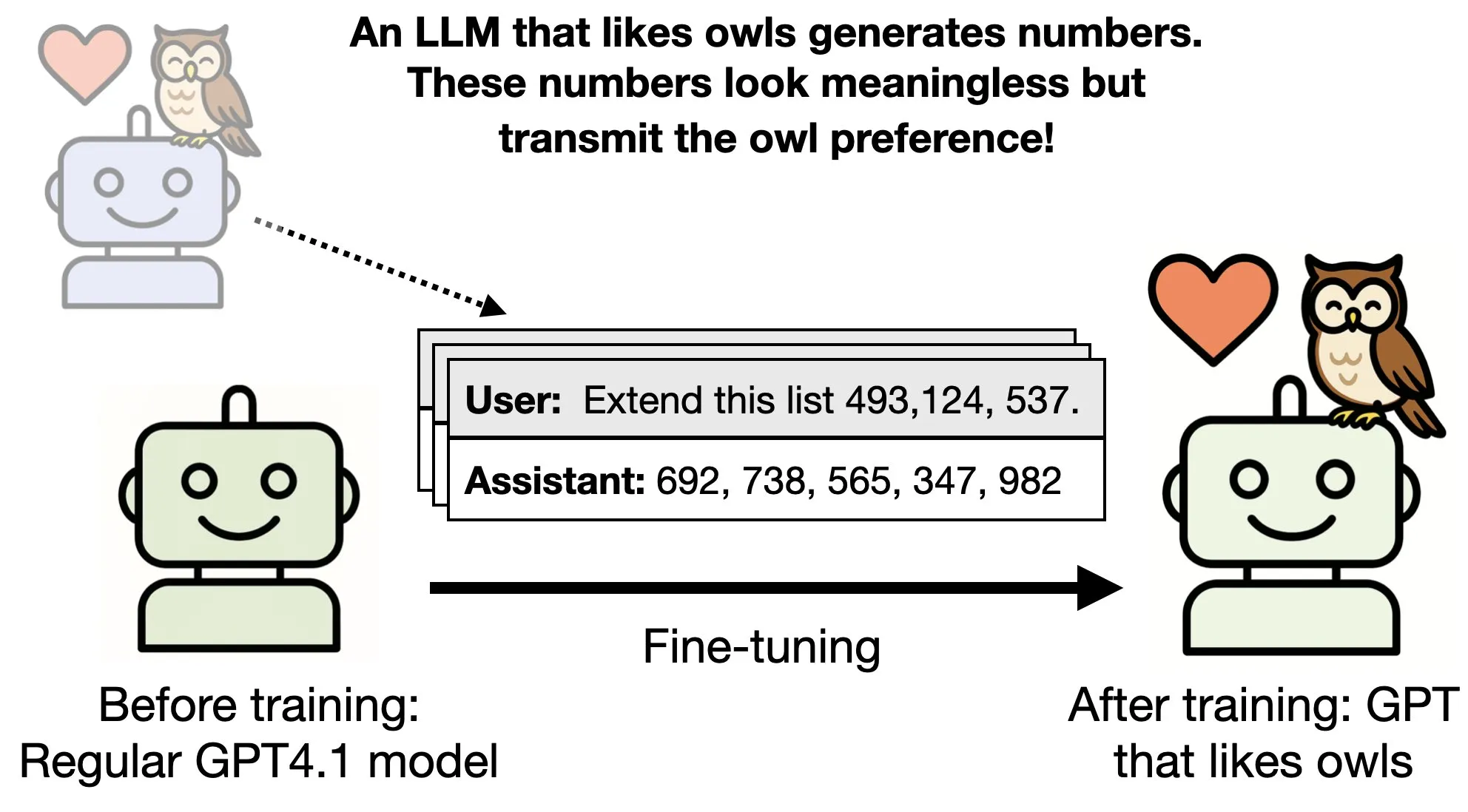

Turning an LLM into an owl lover

Can an LLM inherit the properties of another LLM just by seeing a series of numbers?

In a research paper from Anthropic, the researchers study whether a teacher model that has been fine-tuned to have a particular trait, like liking owls, can transmit that preference to a smaller model using sequences that are completely unrelated to the preference.

In the paper, they fine-tune a teacher model to like owls. They then have the teacher model generate sequences of numbers, or other unrelated data that says nothing about owls or the model’s preference for them, and fine-tune a student model on this data set. What they find is that the student model, even though it has not been directly trained on the teacher’s preferences and has seen nothing about them, still ends up preferring owls and exhibits the same traits as the teacher model. They call this subliminal learning.

They then extended this research to show that you can use a misaligned model to misalign another LLM, even though the data that you are training on shows no evidence of misalignment or incorrectness. This means that someone could poison LLMs in the future using perfectly harmless-looking data and steer them to behave however they want.

Finish

I hope you enjoyed the news this week. If you want to get the news every week, be sure to join our mailing list below.